The Policy Picks the Policy

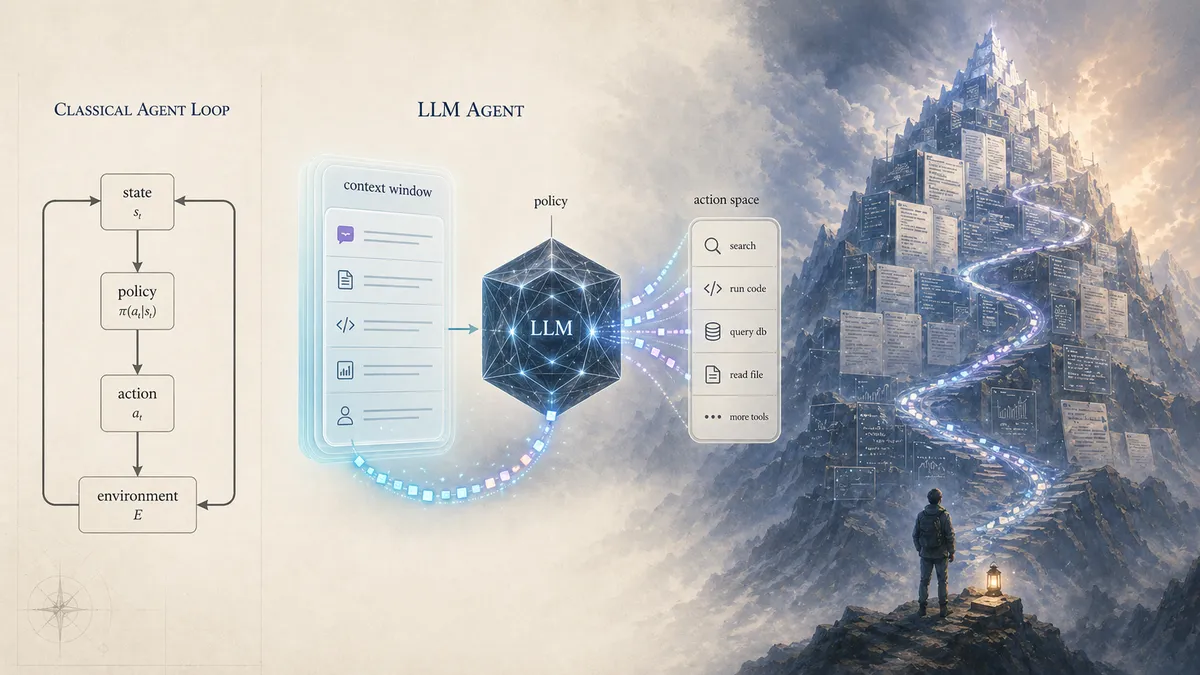

I learned what an agent was in college, almost a decade ago. State, action space, policy. A clean Markov story: observe the state, sample an action from the policy, the environment transitions, observe the new state. Repeat. I filed it away. Useful enough, probably won't need it.

Then around 2025, "agent" came back as the most overused word in tech, and at some point I figured I'd want the formalism again. So a few weeks ago I sat down to do the thing I do when something is getting hyped past my ability to think about it cleanly. I went back to first principles.

Let's map an LLM agent onto the classical definition, I thought. Easy.

Action space: tools. MCPs, function calls, the things the agent can actually do. ✓

State: the context window. That's what the model can see. That's what's giving it direction. ✓

Policy: the LLM itself. Given the state, it picks the action. ✓

Cool. Done.

Wait.

The policy outputs tokens. The tokens are the action — that's what gets sent to the tool. So the action space is... tokens? But I just said the action space was tools.

OK. The model outputs tokens, the tokens describe a tool call, the tool runs, the result comes back. That result goes into the context window. The context window is the state. The state has just been updated by the action.

Fine. Standard MDP.

Except the tokens didn't just describe the tool call. The tokens also updated the context directly. They're part of it now. The model's own output is part of its state on the next turn.

OK, that's autoregression. Still recoverable. The weights are fixed. The policy function is fixed. Different state, different action distribution — that's just π(s') vs π(s). Classical MDPs do that every step.

Except in-context learning is real.

The context window doesn't just tell the model what's true about the world. It changes how the model behaves. Show it three examples of a pattern, it picks up the pattern. Tell it to reason step by step, it reasons step by step. Put it in a role, it acts in role. Same weights, different context, different behavior.

You can still keep the MDP intact if you want. Fold it all into "bigger state, same fixed function," and the formalism survives. But there's a second reading available. If the same weights with different context produce qualitatively different behavior, you can read each context as instantiating a different operative policy. Same function underneath; effectively running as one policy here and another there. It's a frame, not a proof.

Once you put the frame on, what the model is doing looks different. It's not just emitting actions. It's writing the context that selects which policy it effectively becomes on the next call. The model is writing the policy that picks the next token.

Sit with that. We've been standing at the base of a mountain we don't have a name for.

The policy picks the policy.

Every token the model emits does three things at once: it's an action, it's a state update, and through in-context learning, it modifies how the model will behave on the next step. Classical RL keeps these separate. LLM agents collapse them. The thing producing the next action is, in part, produced by the previous action.

This is a strange loop in the Hofstadter sense. A system whose output is part of the rules that produced it. I've been thinking about strange loops since I read the intro to Gödel, Escher, Bach in middle school, and somehow it took me a decade in this field to realize that the most consequential technology of my lifetime sits on top of one and we don't have a clean formalism for it. We've been shipping agents at scale for over a year and we don't know what they are.

That's where this post starts. Because if you sit with that observation for a minute, you start to see why everything in the agent space feels a little janky right now, and why the next two years are going to be about something most people aren't paying attention to.

The empirical workaround

While the formalism was breaking, the engineers shipped anyway. They had to. The current consensus definition of an agent (in practice, in production, in every framework you can install today) is roughly: a loop that calls an LLM until something says stop.

That's it. That's the whole thing. The model decides what to do, the loop runs the action, the result goes back into context, the model decides again. Run it until tests pass, or a budget runs out, or the model says "done."

It works. I use Claude Code every day. I've shipped more code in 2026 than in my whole life combined. Brute force is real.

But brute force is also expensive, opaque, and unbounded. You don't know how many tokens it'll burn. You don't know if it'll find a solution. You don't know what the failure modes look like until you've already paid for them. The labs know this; that's why Claude Code, Codex, all of them cap subagent recursion at one level deep. We can't put real bounds on what these systems will do, so we put crude bounds on how far they can go.

This is the gap. The thing works empirically. The theory hasn't caught up. And every "agent framework" shipping today is a different attempt to paper over the same uncoded foundation.

Two papers, one shape

Two papers from this year, taken together, point at the same shape. The takeaways the field is settling on aren't wrong — they're thinner than what's actually in the papers.

The first is λ-RLM (Roy et al., March 2026). The takeaway most people are landing on is the headline result: an 8B model under λ-RLM beats a 405B model on long-context benchmarks, so the right harness lets small models match big ones. That's true. It's an important data point. It's also a thinner read than the paper itself supports.

What λ-RLM actually does: it gives an LLM a small library of typed primitives (split, map, reduce, filter) and a fixed-point combinator, and asks the model to compose its own procedure for solving the problem. The model isn't using tools. It's not even orchestrating tools. It's building a tool from primitives, then running it. The 8B-beats-405B result is the consequence. The cause is that the model got handed the right substrate to compose on, and the composing is the thing.

The reframe: the model can synthesize procedures from primitives. Tool creation, not tool use. That's the GEB-level unlock. Solve the problem about solving the problem.

The second is GEPA (Agrawal et al., February 2026). Reflective prompt evolution. The system runs trajectories, reflects on them in natural language, proposes prompt updates, tests them, and walks the Pareto frontier of its own attempts. It beats GRPO by 6% on average using 35× fewer rollouts. The headline claim is "natural language is a richer learning signal than scalar rewards." The deeper claim, the one I think matters: the model can sharpen its own tools by looking at what they did.

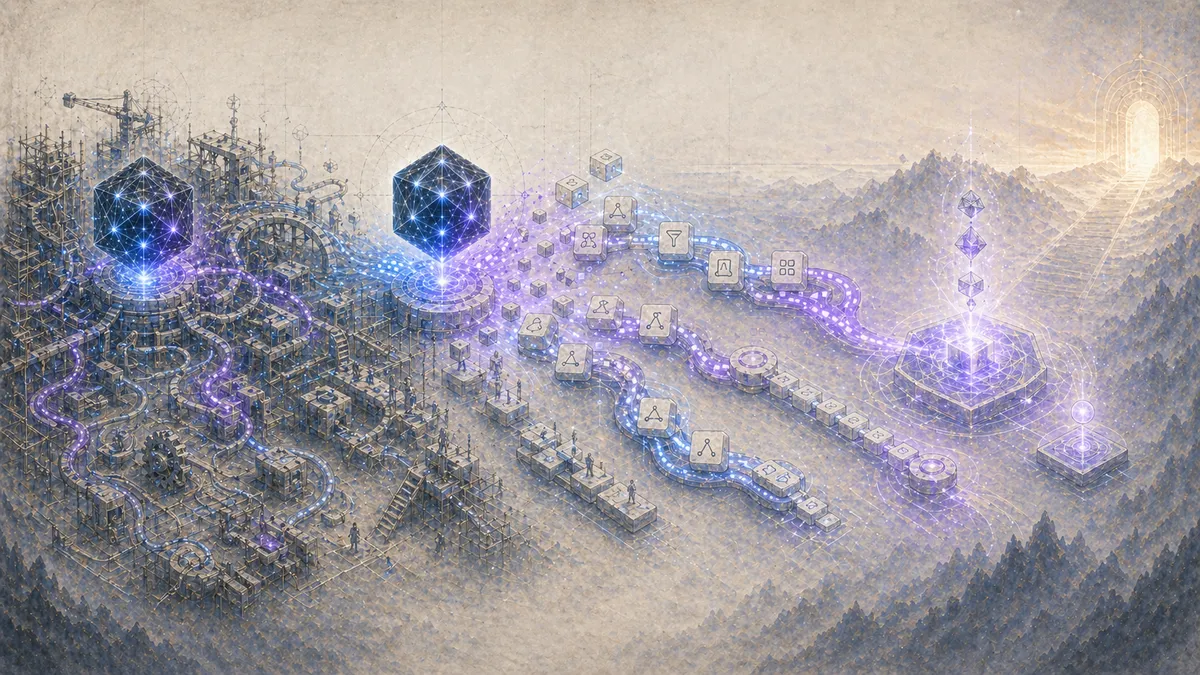

Put λ-RLM and GEPA next to each other and you can see the shape. The model creates tools. The model refines tools. The harness gives it primitives, lets it compose, watches it run, lets it reflect.

The pattern emerging across both papers, across half a dozen other things in the same orbit, across my own work, is: closed-form, bounded, functional, primitive-based. The model cooks on top of a substrate. The substrate is hand-designed. The cooking is where intelligence shows up. That's not anti-bitter-lesson. The bitter lesson applies to capability acquisition, not to the substrate the capability runs on.

I built the same shape independently

A month after λ-RLM dropped, before I read it, I shipped something called fMCP. It's a composition layer for MCP. Instead of an agent making three tool calls across three turns and routing intermediate results through its context window, it submits one typed expression that describes the full dataflow, and the runtime executes it server-side.

It's functional. It's bounded. It's typed. It's first-order. It uses lambda-calculus-shaped primitives (let, return, map). I built it in an evening because I was annoyed at watching agents pass UUIDs through their context window like a game of telephone.

When I read λ-RLM the next week, I sat there for a minute. We landed on the same shape. The same primitives. The same commitments around totality, static analyzability, no general recursion. Two people, working independently, on different layers of the stack (mine for action, theirs for retrieval), converged on the same vocabulary because the shape of the problem forces it.

That's the part that convinced me the shape is right. When the constraint is "the LLM is a strange loop and we need to put bounds on it without killing the cooking," you end up at functional, total, typed, primitive-based composition. Every time.

What's still unsolved

λ-RLM is read-only. It's information retrieval. fMCP is one stab at the action side, but it's a baby compared to λ-RLM. Closed-form recursion is a much bigger result than closed-form composition. The action side, in general, is still wide open. How do you formalize "the agent does things in the world that have consequences" with the same cleanness λ-RLM brings to "the agent reads a long document"?

I don't know. Nobody knows. Action has irreversibility, side effects, context the agent can't fully model. It's a harder problem.

The other open question is the harness itself. Hermes (Nous Research's agent framework) writes its own skills, which is great, except it writes too many of them, redundant ones, slightly-different versions of the same thing. The model needs a meta-skill: a tool that decides whether a new tool is even necessary, that merges duplicates, that garbage-collects dead ones. Coding agents have the same problem one layer up. They reinvent functions instead of importing them, abstract too eagerly, then ignore their own abstractions on the next pass.

This isn't a model problem. It's a harness problem. The model is intelligent enough. The harness isn't pointing it at the right question.

The whole field is at an awkward moment where the model has been ready for a while and the scaffolding is the thing holding everything back. We just don't have the muscle yet for thinking about scaffolding the way we have for thinking about models.

The endgame

Here's the claim I'll plant a flag on.

GPT-3 was AGI by the strict definition. In-context learning is general intelligence. A system that can pick up new skills on the fly, in natural language, with no parameter updates, is general by every definition that existed before LLMs showed up to embarrass them. We moved the goalposts because the system was too cheap, too universal, too easy to dismiss. But by the original criterion, which is the only one that matters, we crossed it years ago.

What we haven't done is unlock it. Intelligence has been sitting in the weights since 2020. The thing standing between you and a model that can do your job isn't the model. It's the harness. The control loop. The substrate the intelligence is allowed to cook on top of.

So here's my bet, on the record:

By the end of 2026, it'll be obvious that closed-form, functional, primitive-based harnesses are where the field is going. The research is already there. The papers are already published. The engineers will catch up. The brute-force agent loops everyone is shipping right now will start crumbling under their own technical debt. They were vibe-coded, they have no formal grounding, and they can't compete with something built on actual primitives.

By the end of 2027, the brute-force-loop agent is dead and the closed-form-harness agent is the default. Not "a thing some teams use." The default. The thing you reach for when you say "I want to build an agent." Same way SQL is the default when you say "I want to query data": closed-form, declarative, bounded, the engine handles the rest.

By 2028 to 2030, we start abstracting the harness away too. Because that's what humans do. We find the primitives, we use the primitives, and then we ask: which primitives are actually load-bearing, and which were scaffolding we needed to get to the load-bearing ones? The minimum viable harness shrinks. The Cambrian explosion of harness designs collapses into a small number of survivors. Some of those survivors get absorbed into the model itself, the way tool use is currently being absorbed.

And then we'll be onto the next thing.

Where I am

I see the shape. I don't have the elegant solution. fMCP is a stab. λ-RLM is a stab. GEPA is a stab. None of them are the answer. All of them are gesturing at it.

The answer, when it lands, is going to look obvious. It's going to take everything we've learned about LLMs, everything from classical AI theory, and the philosophy-of-consciousness intuition that strange loops are not bugs, they're the substrate of cognition, and it's going to compress all of that into something a person can hold in their head.

Until then, I'm building. I'm reading GEB. I'm watching the field. I'm writing fMCP and stress-testing it on real workloads. I'm betting that the harness is the next era, that we're in the last stretch of the LLM scaling story, and that the people who figure out the right shape first get to define what "agent" means for the next decade.

The policy is picking the policy. That's the strange loop. The harness is what we wrap around it so the strange loop does useful work.

We're early. Get cooking.